How XY Builds an AI Agent Orchestration Platform for Healthcare with Temporal

XY Engineering Team

April 23, 2026

Reading Time: 12 mins

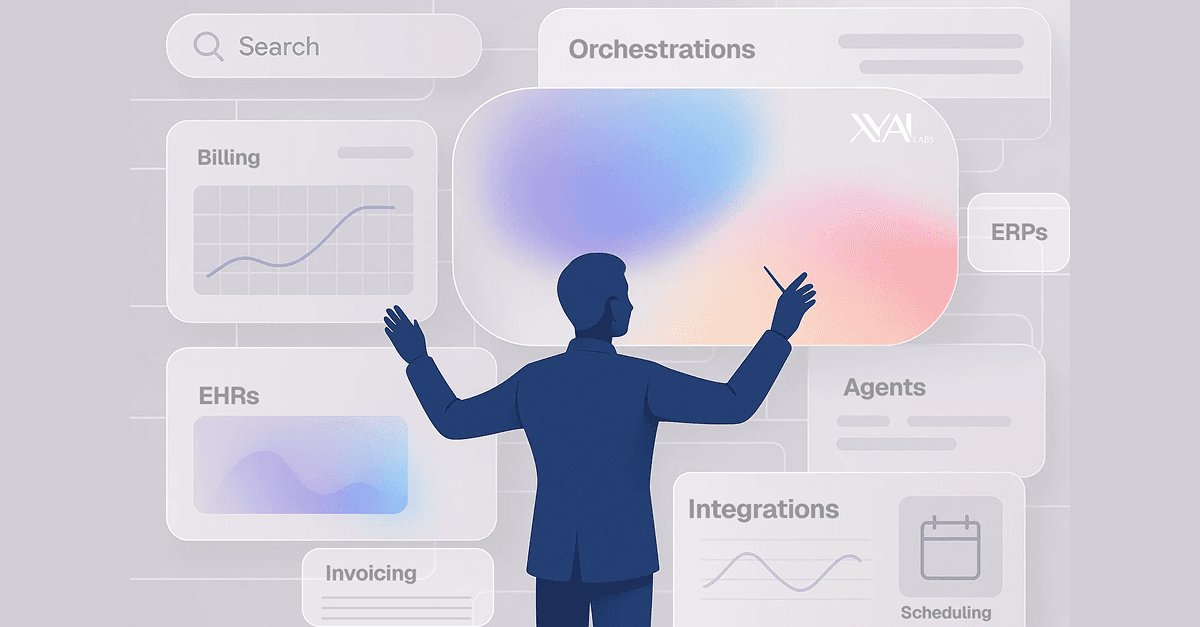

A single insurance claim can touch half a dozen systems before it's resolved: an EHR, a clearinghouse, a document extraction service, an LLM, a human reviewer, and a billing platform, each with its own failure modes, latency profile, and data sensitivity constraints. Multiply that by thousands of claims per day, across dozens of healthcare organizations, each with different systems and business rules, and you have a coordination problem that breaks most automation approaches.

At XY, we set out to solve this: an AI agent orchestration platform that automates complex healthcare workflows end-to-end. The question was never whether to automate, but how to build an orchestration layer robust enough to trust with healthcare operations. Temporal turned out to be the answer, and in this post, we'll show exactly how we use it: as a DSL-driven execution engine for agentic workflows, powered by a YAML-based workflow language built on top of a single generic Temporal workflow class, with infrastructure patterns that let us scale from prototype to production.

The Problem: Why Healthcare Breaks Conventional Orchestration

Before diving into our solution, it's worth understanding why healthcare workflows are so punishing for automation. Most orchestration tools were designed for predictable request-response patterns. Healthcare doesn't work that way:

- Multi-step, multi-system: A single claim processing workflow might touch an EHR system, a clearinghouse API, a document extraction service, an LLM for structured data extraction, a human reviewer for edge cases, and a billing system for final submission, all in sequence, with branching logic at every step.

- Reliability is non-negotiable: When you're processing thousands of insurance claims or patient records in a batch, a single dropped task means revenue loss or compliance risk. You need guaranteed delivery, automatic retries for transient failures, and clear observability into what happened and when.

- Human-in-the-loop is the norm, not the exception: Healthcare AI can't be fully autonomous. Workflows regularly pause for human review: a clinician verifying an AI prediction, an analyst approving a batch action, or a credential refresh that requires manual intervention. The orchestrator needs first-class support for long-running waits.

- Data sensitivity: PHI (Protected Health Information) can't just flow freely through every system. We need architectural patterns that keep sensitive data out of places it shouldn't be, while still enabling complex data pipelines.

- Every customer is different: Each healthcare organization has unique systems, APIs, credential requirements, and business logic. The platform needs to support arbitrary workflow compositions without requiring engineering effort for each new customer.

We learned all of this the hard way. Our first-generation system used internal queues with a polling mechanism that translated YAML configs into DAGs and dispatched work through message queues. State management was manual, retries were hand-coded, and debugging required tailing log files across multiple services. It worked, until it didn't.

The cracks showed in two places. On the throughput side, we had no concept of dedicated task queues with specialized workers polling them. Every activity, whether a lightweight database lookup or a resource-intensive browser automation session, competed for the same pool of consumers. We couldn't right-size infrastructure for different workload profiles, and we couldn't scale one type of work without over-provisioning everything else.

On the engineering velocity side, every new workflow pattern required custom orchestration code. Worse, we couldn't easily version our orchestrator. Evolving the execution model meant risking every in-flight workflow. There was no clean separation between the orchestration layer and the business logic it executed, so changes to one threatened the stability of the other.

We needed an orchestration primitive that was fundamentally more powerful.

Architecture Overview: A YAML DSL on Top of Temporal

The breakthrough came from a simple observation: if every healthcare workflow is fundamentally a composition of the same building blocks (API calls, LLM inferences, human reviews, data transformations), then why write a new Temporal workflow class for each one?

Instead, we built a YAML-based Domain Specific Language and a single generic DSLWorkflow class that interprets it at runtime. One workflow class to rule them all:

    ┌─────────────────────────────────────────────────────────────────┐
    │                   Workflow Definition (YAML)                    │
    │      Defines steps, control flow, and activity parameters       │
    └───────────────────────────────┬─────────────────────────────────┘
                                    │
                                    ▼
    ┌─────────────────────────────────────────────────────────────────┐
    │                           DSL Parser                            │
    │  Validates YAML, merges runtime inputs → typed DSLInput model   │
    └───────────────────────────────┬─────────────────────────────────┘
                                    │
                                    ▼
    ┌─────────────────────────────────────────────────────────────────┐
    │                 Workflow Instance (DSLWorkflow)                 │
    │   A single generic Temporal workflow that interprets the DSL:   │
    │    sequence · parallel · forEach · childWorkflow · branching    │
    │                                                                 │
    │     Dispatches each activity to the appropriate task queue      │
    └───────────────────────────────┬─────────────────────────────────┘
                                    │
                                    ▼
    ┌─────────────────────────────────────────────────────────────────┐
    │                         Temporal Server                         │
    │  Schedules activities, manages retries, tracks execution state  │
    └────────────┬───────────────────┬───────────────────┬────────────┘
                 │                   │                   │
                 ▼                   ▼                   ▼
           ┌────────────┐      ┌────────────┐      ┌────────────┐
           │   Worker   │      │   Worker   │      │   Worker   │
           └────────────┘      └────────────┘      └────────────┘

This means a workflow designer (or our AI Planner Agent) can define a complex multi-step healthcare automation as YAML, and the platform executes it on Temporal without any custom orchestration code, cleanly separating workflow logic from business logic.
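As a rough illustration of what "a single generic workflow that interprets the DSL" means, here is a minimal sketch of an interpreter for three statement types (activity, sequence, parallel). It is not the production DSLWorkflow: it runs plain coroutines with asyncio instead of dispatching Temporal activities, it omits forEach, childWorkflow, and branching, and the registry, the `resolve` helper, and the two sample activities are all hypothetical names invented for this example.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict

# Hypothetical in-process registry. A real DSL workflow would dispatch to
# Temporal activities on task queues instead of calling coroutines directly.
ActivityFn = Callable[..., Awaitable[Any]]
ACTIVITIES: Dict[str, ActivityFn] = {}


def activity(fn: ActivityFn) -> ActivityFn:
    """Register a coroutine under its function name."""
    ACTIVITIES[fn.__name__] = fn
    return fn


@activity
async def fetch_claim(claim_id: str) -> dict:
    return {"claim_id": claim_id, "amount": 120}


@activity
async def double_amount(claim: dict) -> int:
    return claim["amount"] * 2


def resolve(value: Any, variables: dict) -> Any:
    """Replace a whole-string ${{ name }} placeholder with the variable's value."""
    if isinstance(value, str) and value.startswith("${{") and value.endswith("}}"):
        return variables[value[3:-2].strip()]
    return value


async def execute(statement: dict, variables: dict) -> None:
    """Interpret one DSL statement, storing activity results in `variables`."""
    if "activity" in statement:
        spec = statement["activity"]
        params = {k: resolve(v, variables) for k, v in spec.get("params", {}).items()}
        result = await ACTIVITIES[spec["name"]](**params)
        if "result" in spec:
            variables[spec["result"]] = result
    elif "sequence" in statement:
        for child in statement["sequence"]:
            await execute(child, variables)
    elif "parallel" in statement:
        await asyncio.gather(*(execute(c, variables) for c in statement["parallel"]))
    else:
        raise ValueError(f"unknown statement: {list(statement)}")


# Parsed form of a small YAML definition (a dict keeps the sketch stdlib-only).
definition = {
    "sequence": [
        {"activity": {"name": "fetch_claim",
                      "params": {"claim_id": "${{ claim_id }}"},
                      "result": "claim"}},
        {"activity": {"name": "double_amount",
                      "params": {"claim": "${{ claim }}"},
                      "result": "doubled"}},
    ]
}

variables = {"claim_id": "C-1"}
asyncio.run(execute(definition, variables))
```

The point of the design is visible even at this size: adding a new workflow means writing a new definition, while the `execute` function stays unchanged.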

The Planner: AI-Powered Workflow Generation

Here's where things get interesting. Because the DSL is declarative YAML with well-defined semantics, it becomes a natural target for LLM generation. The entry point for workflow creation is our Planner Agent, an LLM-powered service that translates natural language requests into executable YAML.

Here's how it works:

- A user describes what they want: "Process incoming lab results from Spruce, extract patient info, match against our EHR, and upload the report."

- The Planner determines what activities are needed and passes them to our Activity Factory, which generates each activity's implementation on the fly and seeds it into our database.

- Each generated activity is run through sandbox validation and security checks, verifying functional correctness and ensuring no unsafe operations, unauthorized data access, or PHI handling violations before the activity is promoted.

- The LLM then assembles a complete DSL YAML definition wiring the validated activities into the appropriate execution order.
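The post does not say how the security checks are implemented. As one plausible layer, a static pass over the generated source can reject obviously unsafe constructs before anything runs in the sandbox. The sketch below uses Python's `ast` module; the banned lists and the `find_violations` helper are assumptions made for illustration, and a static scan like this would complement, not replace, sandboxed execution.

```python
import ast
from typing import List

# Hypothetical policy: the source does not list what its checks forbid.
BANNED_MODULES = {"os", "subprocess", "socket"}
BANNED_CALLS = {"eval", "exec", "open"}


def find_violations(source: str) -> List[str]:
    """Return human-readable policy violations found in generated code."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        # Collect top-level module names from both import forms.
        if isinstance(node, ast.Import):
            names = [alias.name.split(".")[0] for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [(node.module or "").split(".")[0]]
        else:
            names = []
        violations += [f"banned import: {n}" for n in names if n in BANNED_MODULES]
        # Flag direct calls to banned builtins such as eval(...).
        if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
                and node.func.id in BANNED_CALLS):
            violations.append(f"banned call: {node.func.id}")
    return violations


safe = "def run(x):\n    return x.upper()\n"
unsafe = "import subprocess\ndef run(cmd):\n    return eval(cmd)\n"
```

Here `find_violations(safe)` returns an empty list, while the `unsafe` snippet is flagged for both the `subprocess` import and the `eval` call.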

The Workflow Builder Service then takes the user through step-by-step testing, building partial YAML (steps 1 through N) and running each prefix as a real Temporal workflow to verify correctness. If a step fails, the user can reject it and the Planner regenerates that step with error context. Once all steps pass, the workflow is promoted to a production WorkflowDefinition.
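The prefix-by-prefix testing loop described above can be sketched as follows. This is a simplified illustration: `run_workflow` and `regenerate` are hypothetical callables standing in for "run this prefix as a Temporal workflow" and "ask the Planner for a new version of the step", and the automatic retry loop replaces the user's accept/reject decision.

```python
from typing import Callable, List, Optional

Step = dict  # one parsed DSL statement


def prefixes(steps: List[Step]) -> List[List[Step]]:
    """Return steps[:1], steps[:2], ..., steps[:N]."""
    return [steps[:n] for n in range(1, len(steps) + 1)]


def validate_stepwise(
    steps: List[Step],
    run_workflow: Callable[[List[Step]], Optional[str]],
    regenerate: Callable[[Step, str], Step],
    max_attempts: int = 3,
) -> List[Step]:
    """Validate each prefix; regenerate the newest step when its prefix fails.

    run_workflow(prefix) returns an error message, or None on success.
    regenerate(step, error) returns a replacement for the failing step.
    """
    steps = list(steps)
    for n in range(1, len(steps) + 1):
        for _ in range(max_attempts):
            error = run_workflow(steps[:n])
            if error is None:
                break
            steps[n - 1] = regenerate(steps[n - 1], error)
        else:
            raise RuntimeError(f"step {n} still failing after {max_attempts} attempts")
    return steps


# Stand-ins so the sketch runs without Temporal or an LLM.
def fake_run(prefix: List[Step]) -> Optional[str]:
    last = prefix[-1]
    return None if last.get("ok", True) else f"{last['name']} failed"


def fake_regenerate(step: Step, error: str) -> Step:
    return {**step, "ok": True}


draft = [{"name": "extract"}, {"name": "match", "ok": False}, {"name": "upload"}]
validated = validate_stepwise(draft, fake_run, fake_regenerate)
```

Because each prefix only adds one step to an already-validated prefix, a failure can be attributed to the newest step, which is what makes regenerating a single step with error context workable.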

The result is a remarkably short path from intent to production: natural language → AI-generated activities → sandbox validation & security checks → YAML assembly → production deployment, all without writing Python.

This is only possible because Temporal is code-first. Most workflow orchestration tools force you into their execution model. Because we own the abstraction layer entirely, our DSL can express any workflow topology that Temporal can execute, and Temporal can execute just about anything.

Example Activity: A Single Unit of Work

    - activity:
        name: extract_structured_data
        params:
          document_url: ${{ document_url }}
          extraction_schema: ${{ schema_type }}
        result: extracted_data
        timeout_minutes: 10
        max_retries: 3
        retry_backoff_coefficient: 2.0

The Activity Factory and Integration Layer

This is where Temporal's value as scaffolding for agentic AI becomes most apparent. Temporal gave us more than a runtime; it gave us a vocabulary. The concepts of activities, workflows, task queues, signals, and retries provided a shared language that aligned our engineering and product teams from day one. When a product manager says "this activity should retry three times with backoff," they're speaking directly in Temporal primitives. When an engineer says "this needs its own task queue," everyone understands the infrastructure implication. That alignment has been one of the biggest accelerators for iteration speed.
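To make "retry three times with backoff" concrete, the sketch below computes the wait before each retry from two fields of the example activity (`max_retries: 3`, `retry_backoff_coefficient: 2.0`). Two things are assumptions: the 1-second initial interval (Temporal's documented default, but not confirmed for this platform) and the reading of `max_retries` as the number of retries after the first attempt. The `retry_delays` helper is hypothetical.

```python
from typing import List, Optional


def retry_delays(
    max_retries: int,
    backoff_coefficient: float,
    initial_interval_s: float = 1.0,   # assumed; Temporal's default
    max_interval_s: Optional[float] = None,
) -> List[float]:
    """Seconds to wait before each retry under exponential backoff."""
    delays = []
    for attempt in range(max_retries):
        delay = initial_interval_s * backoff_coefficient ** attempt
        if max_interval_s is not None:
            delay = min(delay, max_interval_s)  # cap growth if a ceiling is set
        delays.append(delay)
    return delays


delays = retry_delays(max_retries=3, backoff_coefficient=2.0)
# delays == [1.0, 2.0, 4.0]
```

Under these assumptions a transient failure is retried after 1, 2, and 4 seconds, so the activity gives up about 7 seconds of waiting after the first failure, plus execution time.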

The Activity Factory is how the platform executes arbitrary business logic. When a workflow needs to interact with a customer's specific systems (their EHR, their clearinghouse, their internal tools), the factory assembles the right activity at runtime from the customer's subset of pre-configured integrations, the capability they need, and the parameters the workflow provides. This is layered on top of a two-tier resolution system:

- Static registry (fast path): A compiled dictionary of shared system activities and commonly-used domain activities, registered with the Temporal worker at startup.

- Dynamic factory (extensibility path): Database-backed ActivityDefinition records scoped per organization, with code, integration dependencies, and parameter schemas.

At runtime, the activity router checks the static registry first, then falls back to the dynamic factory. This lets customers extend the platform without code deployment.
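The two-tier lookup can be sketched in a few lines. Everything here is illustrative: the `ActivityDefinition` fields beyond those named in the post, the in-memory dictionary standing in for the database, and the convention that stored code defines a function called `run` are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class ActivityDefinition:
    """Hypothetical shape of a database-backed, per-organization record."""
    org_id: str
    name: str
    code: str  # Python source that defines a function called `run`


def lookup_patient(patient_id: str) -> dict:
    return {"patient_id": patient_id, "source": "static"}


# Tier 1: static registry, built once at worker startup.
STATIC_REGISTRY: Dict[str, Callable] = {"lookup_patient": lookup_patient}

# Tier 2: stand-in for the table of ActivityDefinition records.
DYNAMIC_DEFINITIONS: Dict[Tuple[str, str], ActivityDefinition] = {
    ("org-a", "normalize_payer"): ActivityDefinition(
        "org-a", "normalize_payer",
        "def run(payer):\n    return payer.strip().upper()\n",
    )
}


def resolve_activity(org_id: str, name: str) -> Callable:
    """Check the static registry first, then fall back to the dynamic factory."""
    if name in STATIC_REGISTRY:
        return STATIC_REGISTRY[name]
    definition: Optional[ActivityDefinition] = DYNAMIC_DEFINITIONS.get((org_id, name))
    if definition is None:
        raise LookupError(f"no activity {name!r} for organization {org_id!r}")
    namespace: dict = {}
    # exec keeps the sketch short; it assumes the code was already validated
    # and, in practice, would run inside a sandbox.
    exec(definition.code, namespace)
    return namespace["run"]
```

Keying the dynamic tier on `(org_id, name)` is what scopes an activity to one organization: `resolve_activity("org-b", "normalize_payer")` raises `LookupError` even though org-a has an activity with that name.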

Continue-as-New: Infinite Batch Processing

Healthcare operates at a scale that surprises people outside the industry. A single payer's claims run, an enrollment period's patient roster, a document backlog: these routinely run to thousands of items. Temporal caps the size of a single execution's event history, and processing thousands of records in one execution would exceed that cap.

We built a deferred continue-as-new (CAN) mechanism that's transparent to the YAML author:

- After each activity completion, the workflow checks Temporal's built-in is_continue_as_new_suggested() flag

- When Temporal signals it's time to roll over, the workflow sets a _can_pending flag

- Active forEach controllers gracefully drain, stopping new items and waiting for in-flight children to complete

- Each controller snapshots its state: next_idx, completed_count, in_flight_children

- The workflow calls workflow.continue_as_new() with accumulated state

- On resume, the new execution skips already-completed elements and picks up where it left off
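The drain, snapshot, and resume cycle above can be simulated without Temporal. In this sketch a simple event counter stands in for `is_continue_as_new_suggested()`, returning the state stands in for calling `workflow.continue_as_new()`, and the outer loop plays the role of Temporal starting the next execution. Items are processed sequentially, so there is nothing in flight to drain; the `ForEachState` class and `run_execution` function are hypothetical, with field names taken from the list above.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ForEachState:
    """Snapshot carried from one execution to the next."""
    next_idx: int = 0
    completed_count: int = 0
    in_flight_children: list = field(default_factory=list)


def run_execution(items: list, state: ForEachState,
                  history_budget: int, processed: list) -> Optional[ForEachState]:
    """Process items until the simulated history budget is spent.

    Returns the snapshot for the next execution, or None when all items are done.
    """
    events = 0
    can_pending = False
    while state.next_idx < len(items):
        if can_pending:
            # Stop taking new items and hand the snapshot to the next run.
            return state
        processed.append(items[state.next_idx])
        state.next_idx += 1
        state.completed_count += 1
        events += 1
        can_pending = events >= history_budget  # stand-in for Temporal's hint
    return None


items = list(range(10))
processed: List[int] = []
state: Optional[ForEachState] = ForEachState()
executions = 0
while state is not None:
    executions += 1
    state = run_execution(items, state, history_budget=4, processed=processed)
```

With a budget of 4 events and 10 items, the work is spread over three executions, and every item is processed exactly once because each new execution starts from `next_idx`.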

Infrastructure: Scaling Precisely Where It Matters

One of the most impactful benefits of Temporal has been a clean answer to a question that plagues every platform team: how do you scale heterogeneous workloads without over-provisioning? The answer is that Temporal decouples compute from orchestration. Temporal Cloud handles workflow state, history, and task routing. Our workers are pure compute, stateless containers that poll task queues. This separation enables precise infrastructure optimization.

Task Queue Specialization

We run multiple task queues with purpose-built workers:

    ┌───────────────────────────────────────────────────────┐
    │                    Temporal Cloud                     │
    │                                                       │
    │  ┌─────────────┐  ┌──────────────┐   ┌─────────────┐  │
    │  │generic-queue│  │browser-queue │   │  gpu-queue  │  │
    │  └──────┬──────┘  └──────┬───────┘   └──────┬──────┘  │
    └─────────┼────────────────┼──────────────────┼─────────┘
              │                │                  │
              ▼                ▼                  ▼
      ┌──────────────┐ ┌────────────────┐ ┌──────────────┐
      │ CPU Workers  │ │ Browser Workers│ │ GPU Workers  │
      │  3 replicas  │ │   4 replicas   │ │  On-demand   │
      │ 4GB RAM each │ │ 10GB RAM each  │ │ T4/A100 GPU  │
      │100 activities│ │ 8 browsers ea. │ │ ML inference │
      └──────────────┘ └────────────────┘ └──────────────┘

- Generic workers handle the majority of activities: database operations, API calls, LLM inference via hosted APIs. They're lightweight (4GB RAM), run 100 concurrent activities, and scale horizontally with zero state.

- Browser workers run headless Chrome with our browser extension for web automation, logging into healthcare portals, extracting data from web interfaces, and downloading documents. They need 10GB RAM (Chrome is hungry), run at most 8 concurrent browser instances (semaphore-controlled), and use a shared volume for session persistence across restarts.

- GPU workers (on-demand) handle ML inference workloads: document OCR, custom model inference, embedding generation. They scale to zero when idle and spin up on demand.
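The "semaphore-controlled" cap on browser instances mentioned above can be illustrated with `asyncio.Semaphore`. This is a sketch of the general technique, not the platform's worker code: `browser_activity` is a hypothetical stand-in whose short sleep replaces driving a real Chrome session, and the `stats` dictionary exists only to observe the peak concurrency.

```python
import asyncio

MAX_BROWSERS = 8  # per-worker cap quoted in the post


async def browser_activity(task_id: int, semaphore: asyncio.Semaphore, stats: dict) -> int:
    """Hypothetical browser task; the sleep stands in for driving Chrome."""
    async with semaphore:  # waits here while MAX_BROWSERS sessions are open
        stats["active"] += 1
        stats["peak"] = max(stats["peak"], stats["active"])
        await asyncio.sleep(0.01)
        stats["active"] -= 1
    return task_id


async def main():
    semaphore = asyncio.Semaphore(MAX_BROWSERS)
    stats = {"active": 0, "peak": 0}
    # Twenty tasks arrive at once, but only MAX_BROWSERS run concurrently.
    results = await asyncio.gather(
        *(browser_activity(i, semaphore, stats) for i in range(20))
    )
    return results, stats


results, stats = asyncio.run(main())
```

All twenty tasks complete, but `stats["peak"]` never exceeds `MAX_BROWSERS`, which is the property that keeps a 10GB worker from being overrun by Chrome processes.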

The YAML DSL routes activities to the right queue with a single field:

    - activity:
        name: run_browser_workflow
        task_queue: browser-queue  # Routes to browser workers
        params:
          code: |
            navigate(url="https://portal.example.com")
            extract_table(selector="[name='claimsTable']")

Autoscaling Based on Queue Depth

Because Temporal workers are stateless (they just poll a queue, execute activities, and report results), autoscaling is straightforward. We monitor queue depth per task queue and scale replicas independently:

- Queue depth increasing on browser-queue? Add browser worker replicas.

- generic-queue empty? Scale down to minimum replicas.

- Batch job submitted with 2,000 items? Burst generic workers to handle throughput.

There's no shared state between workers. Any worker polling a queue can pick up any task on that queue. Workers can restart, crash, or scale without affecting in-progress workflows; Temporal retries the activity on another worker automatically.
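The scaling rules listed above reduce to a small calculation per queue. The sketch below is one simple way to express it, not the platform's actual autoscaler: the `desired_replicas` helper, the "clear the backlog in one pass" target, and the minimum and maximum replica counts are assumptions, while the per-replica capacities (100 activities, 8 browsers) come from the worker descriptions earlier in the post.

```python
import math


def desired_replicas(queue_depth: int, per_replica_capacity: int,
                     min_replicas: int, max_replicas: int) -> int:
    """Replicas needed to work off the backlog in one pass, clamped to bounds."""
    needed = math.ceil(queue_depth / per_replica_capacity)
    return max(min_replicas, min(max_replicas, needed))


# A 2,000-item batch on generic workers that each run 100 activities.
burst = desired_replicas(2000, per_replica_capacity=100, min_replicas=3, max_replicas=30)

# 40 queued browser tasks on workers that each run 8 browsers.
browser = desired_replicas(40, per_replica_capacity=8, min_replicas=1, max_replicas=10)

# An empty queue falls back to the configured minimum.
idle = desired_replicas(0, per_replica_capacity=100, min_replicas=3, max_replicas=30)
```

Under these assumed bounds the batch bursts the generic pool to 20 replicas, the browser queue needs 5, and the idle queue settles at its minimum of 3. Because each queue is evaluated independently, a browser backlog never forces extra generic workers.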

Customer Namespace Isolation

Temporal Cloud's namespace model maps cleanly to our multi-tenant architecture. We run separate namespaces for each environment (dev, staging, prod), and the architecture supports per-customer namespaces for organizations that require strict compute isolation. Their workflows execute on dedicated workers polling a customer-specific queue within their namespace, with no resource contention from other tenants.

Cost Efficiency

The infrastructure story comes together financially:

- Temporal Cloud: Pay per action (workflow starts, activity completions, signals). No idle costs for workflow state. Predictable, linear scaling.

- Workers: Standard container orchestration (Docker Compose in dev, Kubernetes in production). Scale to zero when idle. Right-size per queue type.

- No over-provisioning: Browser workers are expensive (10GB RAM, Chrome licenses), but they only run when browser activities are queued. Generic workers are cheap and plentiful.

The combined result is that we pay proportionally to the work we do, with no fixed infrastructure overhead for idle capacity.

What's Next: Evolving the DSL with Confidence

The question we get most often is: "What happens when you need to change the DSL?" It's the right question, because in healthcare, you can't break workflows that are actively processing claims. One of the things we're most excited about is how Temporal positions us to evolve our DSL without fear of breaking backwards compatibility. Because every WorkflowDefinition captures the YAML definition, the activity set it was built against, and the DSL version it targets, we have full provenance for every workflow in production. When we add new statement types (conditional branching expressions, retry-at-the-DSL-level, or new composition primitives), existing workflows continue to execute against the DSL version they were authored for. New workflows pick up the latest capabilities.

This is a direct consequence of Temporal's architecture. The DSLWorkflow class is versioned code. The activity registry is a known set. The YAML is an immutable artifact. We can ship DSL v2 while v1 workflows are still running in production, and Temporal's deterministic replay guarantees mean in-flight workflows won't break. That kind of safe evolution is incredibly hard to achieve with a bespoke orchestrator, and it's something we get essentially for free from Temporal's execution model.
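One way to picture "existing workflows continue to execute against the DSL version they were authored for" is a lookup keyed on the version stored with each definition. The sketch below is illustrative only: the `WorkflowDefinition` field types, the per-version grammar functions, and the hypothetical `retry` statement added in v2 are assumptions, not the platform's schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Set


@dataclass(frozen=True)
class WorkflowDefinition:
    """Fields named in the post; the concrete types are assumptions."""
    yaml_text: str
    activity_set: FrozenSet[str]
    dsl_version: int


def statement_types_v1() -> Set[str]:
    return {"activity", "sequence", "parallel", "forEach", "childWorkflow"}


def statement_types_v2() -> Set[str]:
    # Hypothetical v2 adds a statement type without touching v1.
    return statement_types_v1() | {"retry"}


SUPPORTED: Dict[int, Callable[[], Set[str]]] = {
    1: statement_types_v1,
    2: statement_types_v2,
}


def allowed_statements(definition: WorkflowDefinition) -> Set[str]:
    """Pick the grammar pinned by the definition, not the latest one."""
    try:
        return SUPPORTED[definition.dsl_version]()
    except KeyError:
        raise ValueError(f"unsupported DSL version {definition.dsl_version}") from None


old = WorkflowDefinition("...", frozenset({"extract_structured_data"}), dsl_version=1)
new = WorkflowDefinition("...", frozenset({"extract_structured_data"}), dsl_version=2)
```

Here `allowed_statements(old)` excludes `retry` while `allowed_statements(new)` includes it, so shipping v2 changes nothing about how a v1 definition is interpreted.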

XY is a healthcare AI agent orchestration platform. We use Temporal Cloud to power reliable, scalable, and compliant automation workflows for healthcare organizations. Learn more at xy.ai.

Book a Demo

See how AI Agents can transform your operations

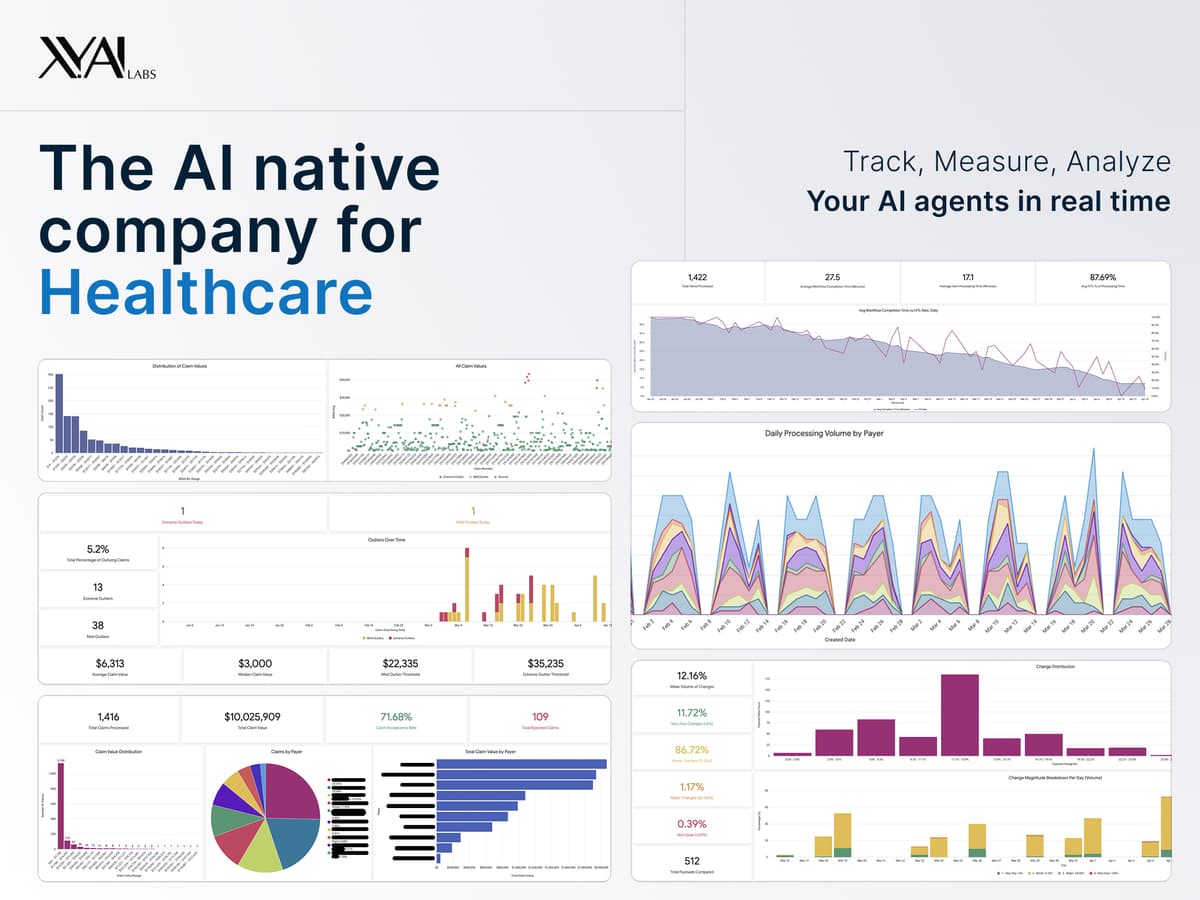

Are You Still Running Your RCM in the Dark?

XY.AI Labs Team

April 15, 2026

Reading Time4 mins

The Missing Layer in Healthcare AI: Execution

Sam De Brouwer

March 11, 2026

Reading Time5 mins

Are You Automating Jobs or Redesigning Work?

XY.AI Labs Team

February 21, 2026

Reading Time4 mins

How to Choose the Right AI Partner for Your Healthcare Operations

XY.AI Labs Team

February 5, 2026

Reading Time5 mins

Have We Been Here Before? A Thought on AI Infrastructure

Sam De Brouwer

January 29, 2026

Reading Time5 mins

Finally, Healthcare Is Becoming a Learning System with AI as its Catalyst

Sam De Brouwer

December 19, 2025

Reading Time7 mins

Connect Healthcare Systems with Agentic AI

XY.AI Labs Team

November 24, 2025

Reading Time8 mins

You love LLMs and co-pilots? You'll love AI Agents even more.

Sam De Brouwer

November 13, 2025

Reading Time10 mins

Why I'm Building for the Overlooked Majority of Healthcare

Sam De Brouwer

November 10, 2025

Reading Time6 mins

From Code to Care: How Zero-Cost Software Is Reshaping Healthcare

Sam De Brouwer

October 13, 2025

Reading Time8 mins

From Clicks to Care: Reinventing Healthcare Workflows with Our XY.AI Multimodal Browser Agents

Scott Cressman

September 12, 2025

Reading Time5 mins

Tough conversations about success and failure are not new in AI

Sam De Brouwer

August 28, 2025

Reading Time3 mins

9 Real-World Applications of AI Across Industries

XY.AI Labs Team

August 24, 2025

Reading Time10 mins

10 Benefits of Artificial Intelligence in Healthcare

XY.AI Labs Team

August 23, 2025

Reading Time10 mins

Three Reports, One Message: Give Time Back to Care

XY.AI Labs Team

August 22, 2025

Reading Time2 mins

What Free Compute Signals About a Startup like XY.AI Labs?

Sam De Brouwer

August 14, 2025

Reading Time4 mins

What We're Learning From Our Latest Integrations

Sam De Brouwer

July 31, 2025

Reading Time6 mins

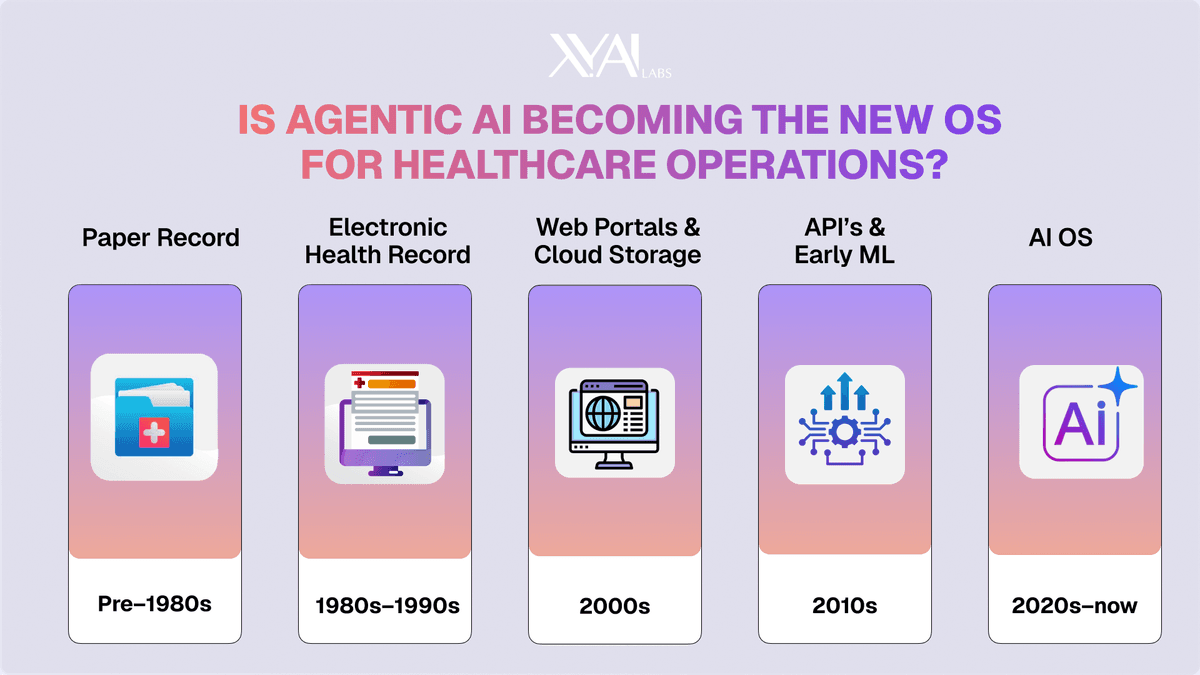

Is Agentic AI Becoming the New OS for Healthcare Operations?

Sam De Brouwer

July 10, 2025

Reading Time4 mins

9 AI Trends To Transform Healthcare and Medicine And Why They're Closer Than You Think

XY.AI Labs Team

June 10, 2025

Reading Time5 mins

What I am Learning on the Front Lines of RCM in Healthcare - and Why We Can't Ignore Automation Any Longer

Sam De Brouwer

May 6, 2025

Reading Time8 mins

AI Agents in Healthcare: The Smart Workforce You Didn't Know You Could Have

Scott Cressman

April 17, 2025

Reading Time8 mins

15 Years at the Edge of AI and Healthcare - and Why Everything has Changed

Sam De Brouwer